Guangkai Xu 徐光锴

State Key Lab of CAD & CG, Zhejiang University

Biography

I am currently a third-year Ph.D. student in the College of Computer Science and Technology at Zhejiang University, advised by Prof. Chunhua Shen and Hao Chen. Before that, I received my M.S. degree in 2023 from the Department of Automation at the University of Science and Technology of China (USTC), where I was a member of USTC-BIVLab and was advised by Prof. Feng Zhao. I received my B.E. degree in 2020 from the University of Electronic Science and Technology of China (UESTC).

My research interests include Embodied AI with VLMs, MLLMs, and Visual Perception.

Awards

- 1st place in the 2nd Monocular Depth Estimation Challenge (CVPR 2023 Workshop).

- 2nd place in the Streaming Perception 2D Image Detection Competition (CVPR 2021 Workshop).

- 3rd place in the UG2+ Low-Light Face Detection Competition (CVPR 2021 Workshop).

- National Second Prize, NXP Cup National College Student Intelligent Car Competition (2018).

News

- [2026.02] One paper was accepted by CVPR 2026.

- [2025.06] One paper was accepted by ICCV 2025.

- [2025.03] One paper was accepted by SIGGRAPH 2025.

- [2025.01] One paper was accepted by ICLR 2025.

- [2024.12] One paper was accepted by AAAI 2025 (Oral).

- [2024.09] One paper was accepted by NeurIPS 2024.

- [2024.01] One paper was accepted by ICRA 2024.

- [2023.07] One paper was accepted by ICCV 2023.

Publications

* indicates equal contribution (co-first authors). † indicates corresponding author.

♠ (Co-)First-author Papers

CVPR 2026

Unlocking the Power of Critical Factors for 3D Visual Geometry Estimation

Guangkai Xu*, Hua Geng*, Huanyi Zheng, Songyi Yin, Yanlong Sun, Hao Chen, Chunhua Shen†

CVPR, 2026

We identify the critical factors behind 3D visual geometry estimation and show how explicitly modeling them leads to stronger and more reliable geometry predictions.

ICLR 2025

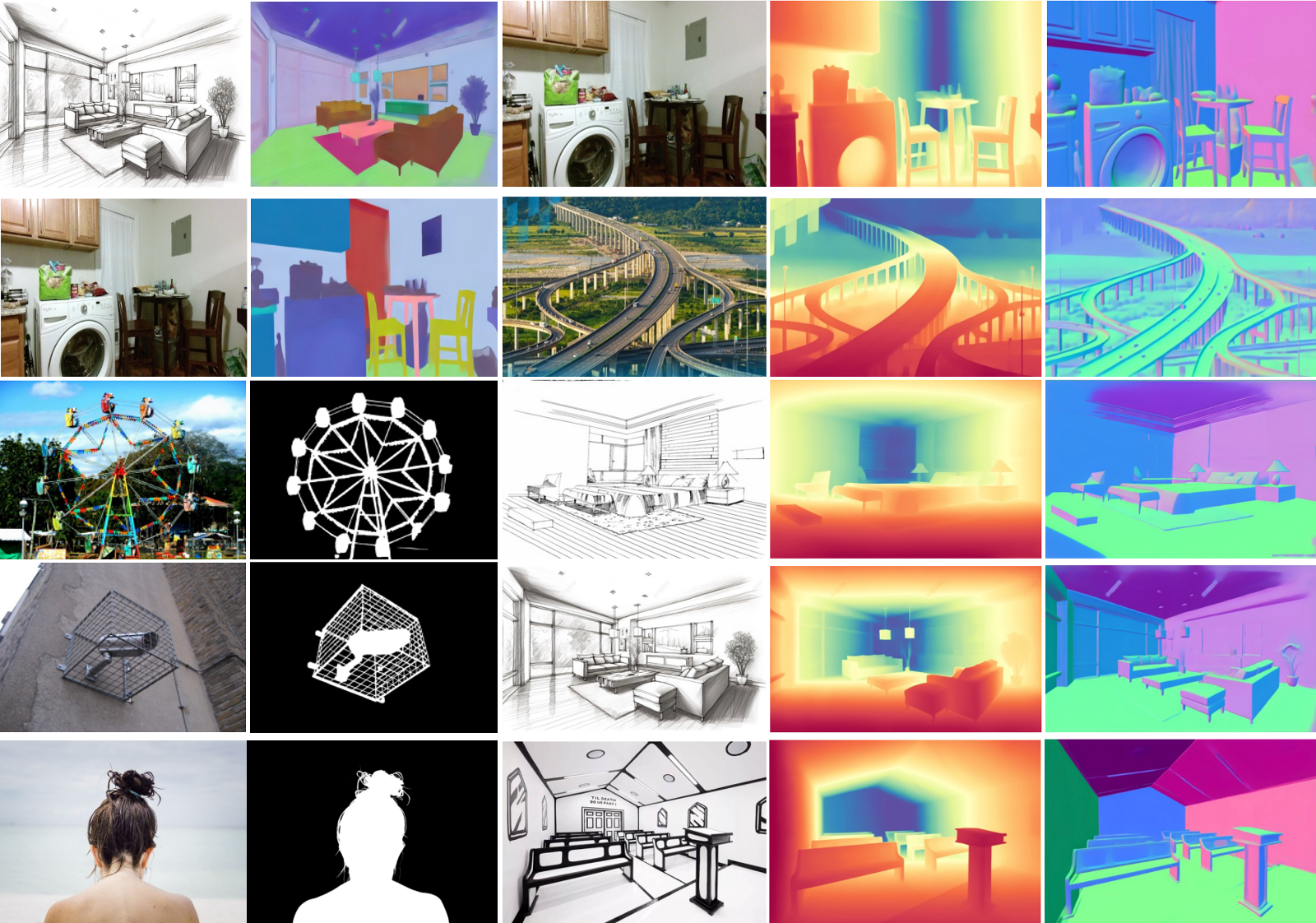

What Matters When Repurposing Diffusion Models for General Dense Perception Tasks?

Guangkai Xu, Yongtao Ge, Mingyu Liu, Chengxiang Fan, Kangyang Xie, Zhiyue Zhao, Hao Chen, Chunhua Shen†

ICLR, 2025 [PDF] [Code]

Instead of running many diffusion steps at test time, the method fine-tunes a deterministic one-step model for dense prediction, making diffusion-based perception simpler and more efficient.

ICCV 2023

FrozenRecon: Pose-free 3D Scene Reconstruction with Frozen Depth Models

Guangkai Xu*, Wei Yin*, Hao Chen, Chunhua Shen, Kai Cheng, Feng Zhao†

ICCV, 2023 [PDF] [Code] [Homepage]

FrozenRecon keeps a pre-trained depth model fixed and only optimizes a small set of geometric correction parameters, offering a practical way to build coherent 3D scenes from ordinary video.

AAAI 2025

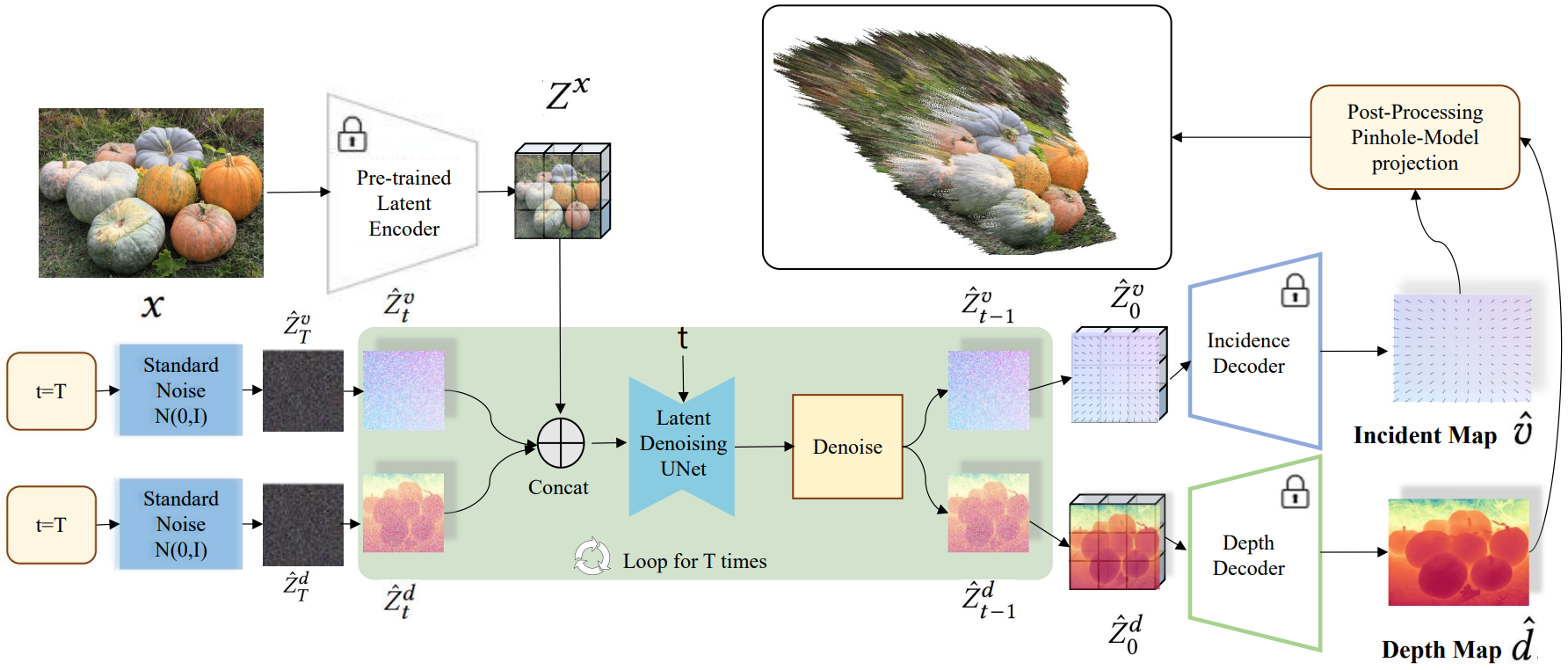

DiffCalib: Reformulating Monocular Camera Calibration as Diffusion-based Dense Incident Map Generation

Xiankang He*, Guangkai Xu*, Bo Zhang, Hao Chen, Ying Cui, Dongyan Guo†

AAAI, 2025 (Oral) [PDF] [Code]

DiffCalib predicts a dense incident map and depth from one RGB image, then recovers camera intrinsics with RANSAC, making single-image calibration more reliable in everyday scenes.

MIR 2024

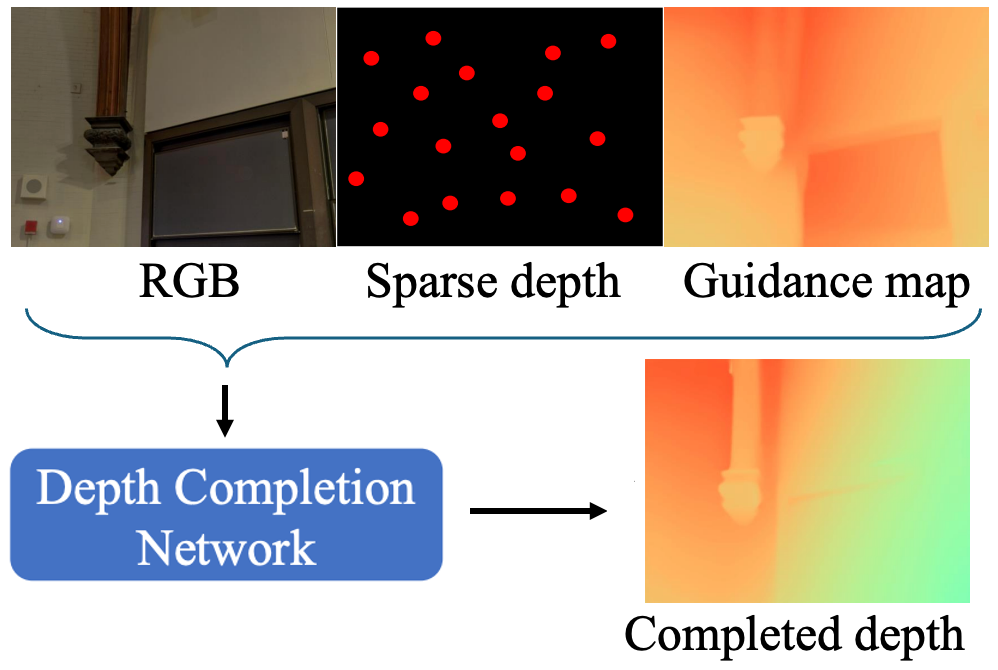

Towards Domain-Agnostic Depth Completion

Guangkai Xu*, Wei Yin*, Jianming Zhang, Oliver Wang, Simon Niklaus, Simon Chen, Jia-Wang Bian†

Machine Intelligence Research (MIR), 2024 [PDF]

A single model uses image-based depth estimates to fill missing regions in sparse and noisy depth maps, improving robustness when depth comes from varied sensors and real-world conditions.

arXiv 2022

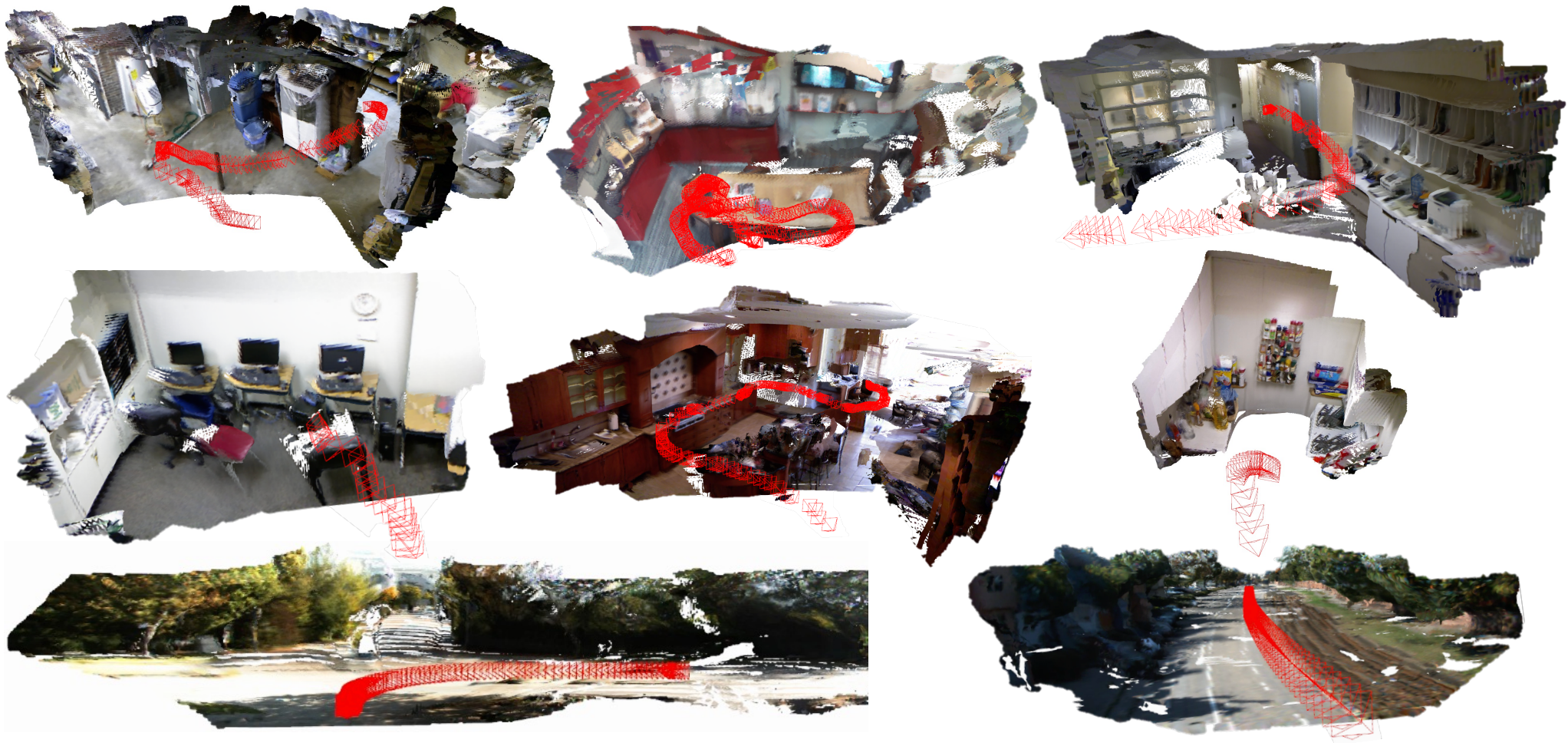

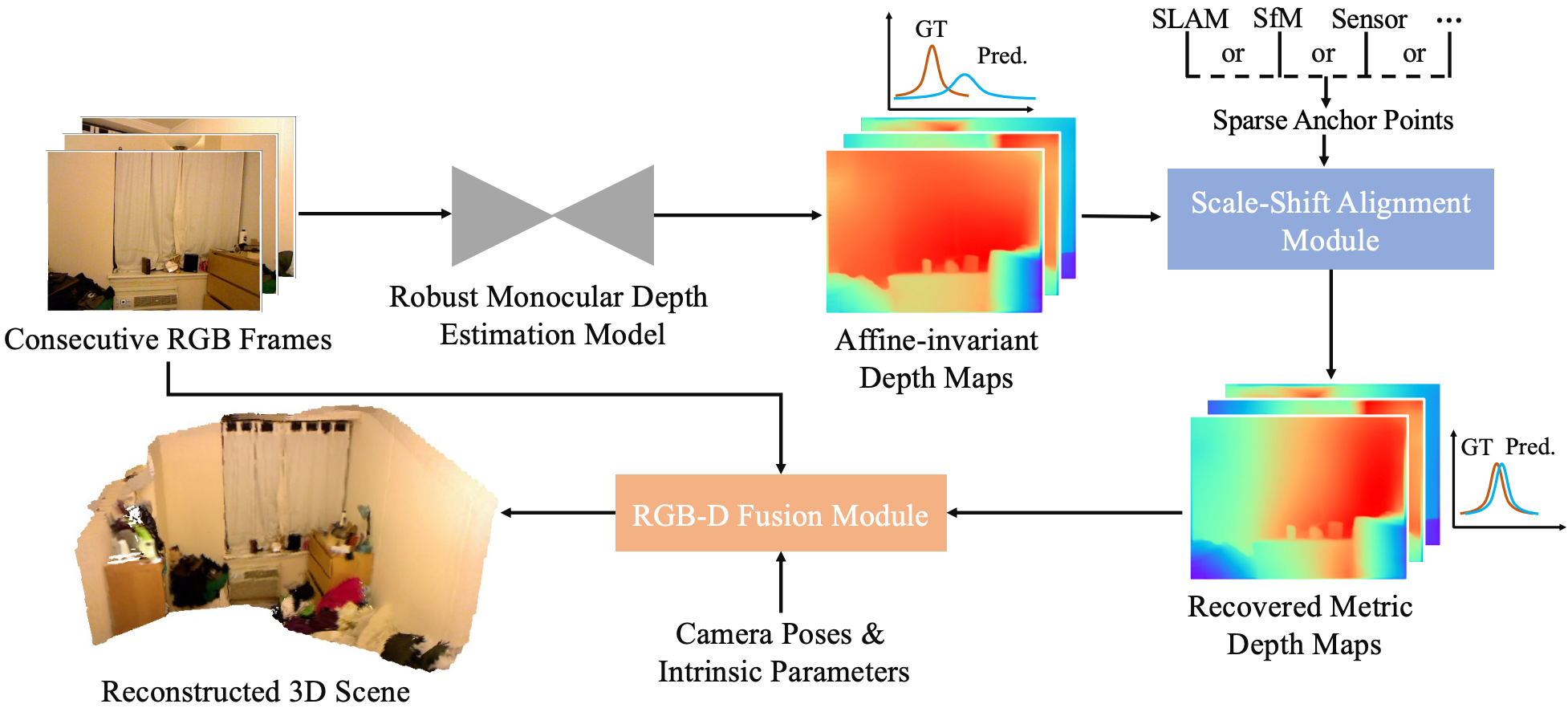

Towards 3D Scene Reconstruction from Locally Scale-Aligned Monocular Video Depth

Guangkai Xu*, Wei Yin*, Hao Chen*, Chunhua Shen, Kai Cheng, Feng Wu, Feng Zhao†

arXiv, 2022 [PDF]

Local scale-and-shift recovery from sparse anchor points turns monocular video depth into consistent metric geometry for accurate dense 3D scene reconstruction.

♠ Co-author Papers

SIGGRAPH 2025

Generative Video Matting

Yongtao Ge, Kangyang Xie, Guangkai Xu, Li Ke, Mingyu Liu, Longtao Huang, Hui Xue, Hao Chen, Chunhua Shen†

SIGGRAPH, 2025 [PDF]

A pre-trained video diffusion model is repurposed for matting so the system can preserve fine structures like hair while keeping predictions coherent over time.

ICCV 2025

POMATO: Marrying Pointmap Matching with Temporal Motion for Dynamic 3D Reconstruction

Songyan Zhang*, Yongtao Ge*, Jinyuan Tian*, Guangkai Xu, Hao Chen†, Chen Lv, Chunhua Shen

ICCV, 2025 [PDF] [Code]

POMATO learns 3D geometry and cross-view matching together, so it can reconstruct dynamic scenes while also estimating motion masks and tracking points over time.

NeurIPS 2024

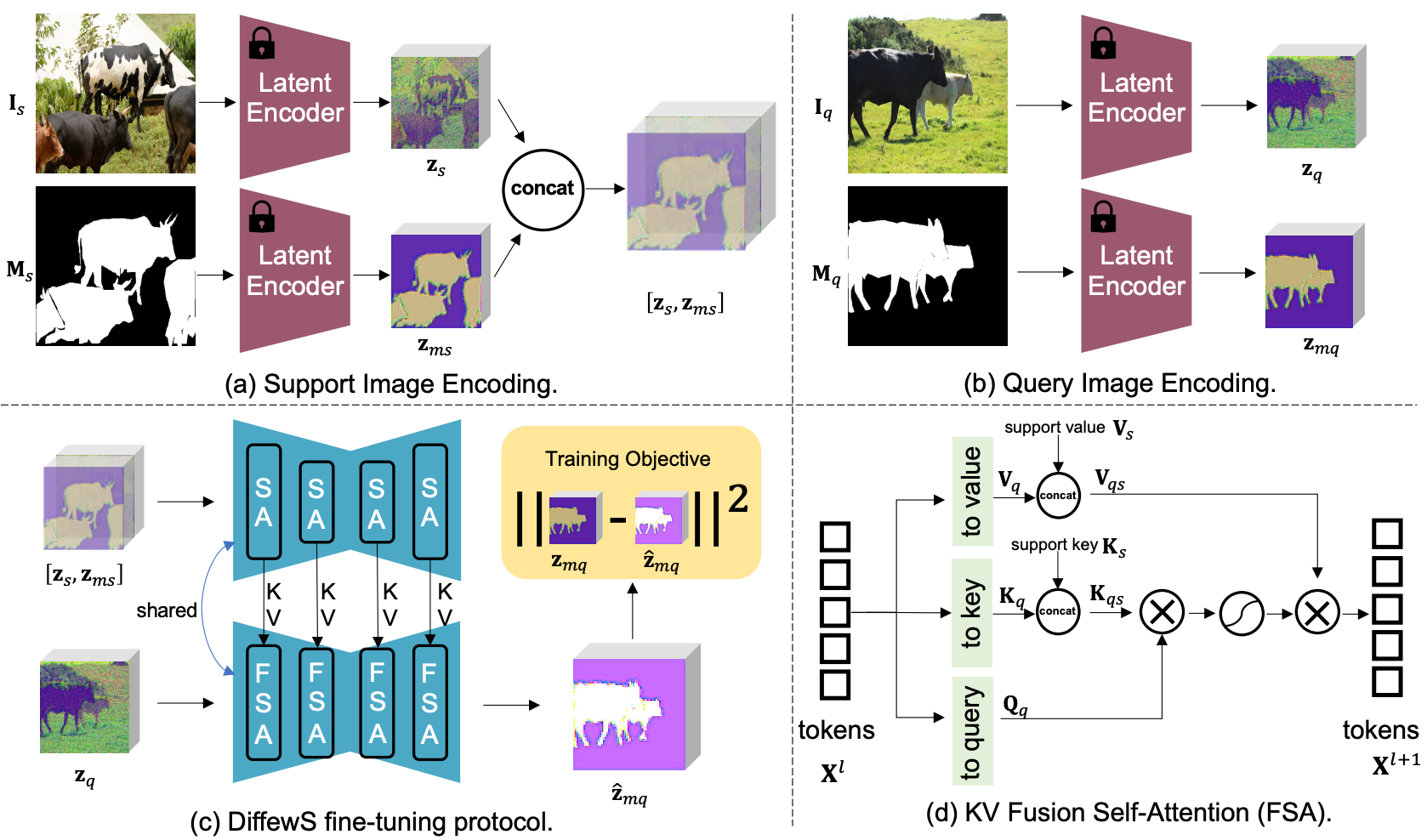

Unleashing the Potential of the Diffusion Model in Few-shot Semantic Segmentation

Muzhi Zhu*, Yang Liu*, Zekai Luo*, Chenchen Jing, Hao Chen†, Guangkai Xu, Xinlong Wang, Chunhua Shen

NeurIPS, 2024 [PDF] [Code]

DiffewS adapts a latent diffusion model to few-shot segmentation by fusing support and query features and predicting masks directly, making diffusion priors useful for segmenting new categories from limited examples.

ICRA 2024

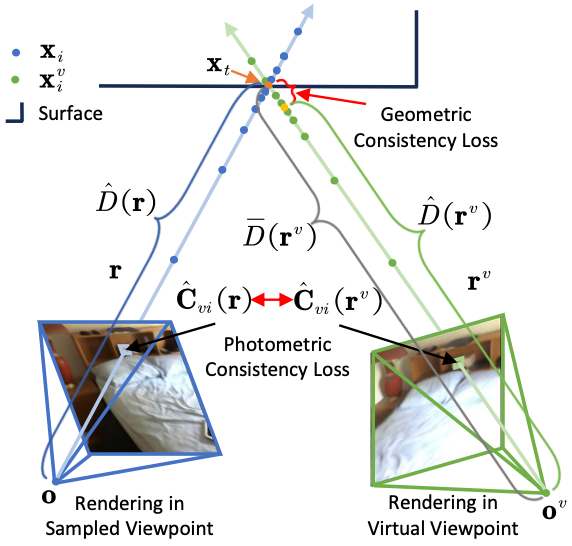

Improving Neural Indoor Surface Reconstruction with Mask-Guided Adaptive Consistency Constraints

Xinyi Yu, Liqin Lu, Jintao Rong, Guangkai Xu†, Linlin Ou

ICRA, 2024 [PDF]

Rather than treating all rays equally, the approach uses adaptive masks and virtual viewpoints to enforce cross-view consistency, which improves self-supervised indoor reconstruction from posed images.

CVPR Workshop 2023

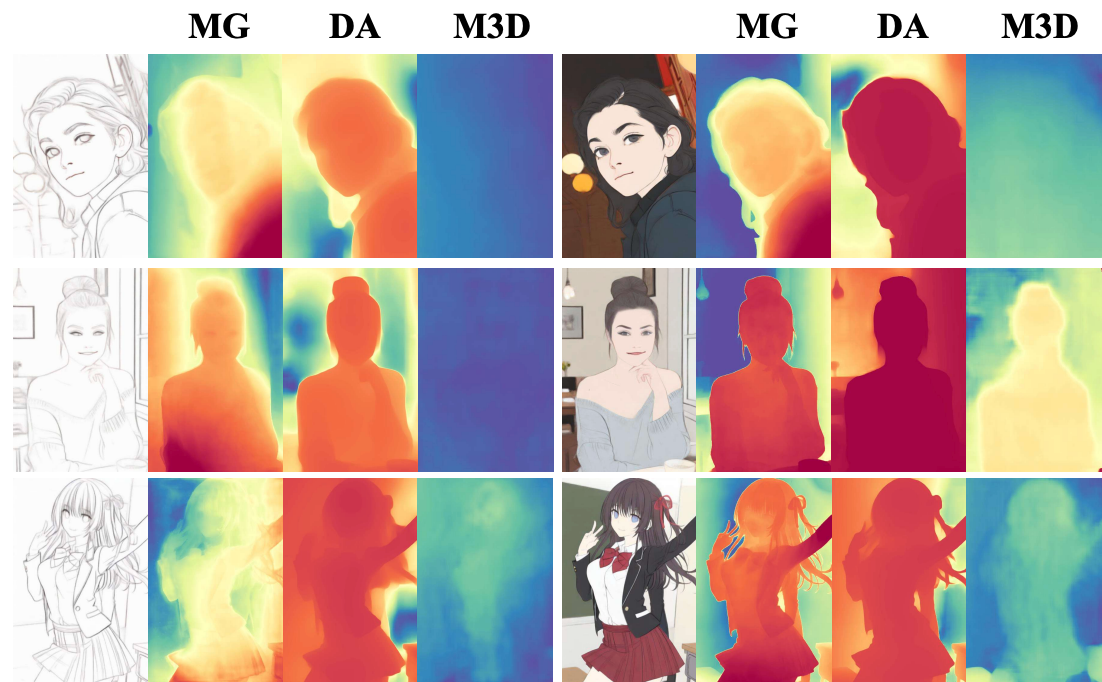

The Second Monocular Depth Estimation Challenge

CVPR Workshop, 2023 [PDF] [Homepage]

We achieved 1st place in the CVPR 2023 Monocular Depth Estimation Challenge.

arXiv 2024

GeoBench: Benchmarking and Analyzing Monocular Geometry Estimation Models

Yongtao Ge, Guangkai Xu, Zhiyue Zhao, Libo Sun, Zheng Huang, Yanlong Sun, Hao Chen, Chunhua Shen†

arXiv, 2024 [PDF] [Code]

A unified benchmark and codebase compares monocular depth and normal estimators under matched settings, making it easier to separate gains from model design, training data, and evaluation choices.

arXiv 2022

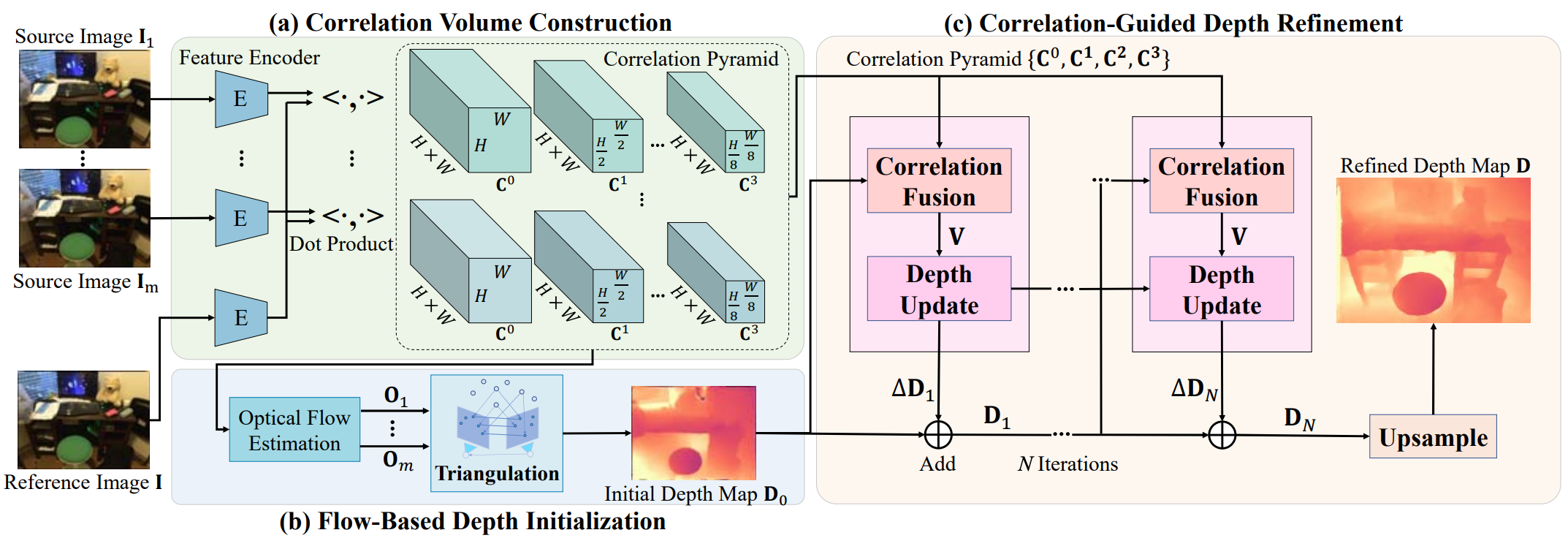

Exploiting Correspondences with All-pairs Correlations for Multi-view Depth Estimation

Kai Cheng, Hao Chen, Wei Yin, Guangkai Xu, Xuejin Chen†

arXiv, 2022 [PDF]

All-pairs correlations and iterative refinement let the system recover fine structure and sharper boundaries in multi-view depth maps without relying on predefined cost-volume depth bins.

Academic Service

- Conference Reviewer: CVPR, ICCV, ECCV, ICLR, AAAI.

- Journal Reviewer: Artificial Intelligence (AI).